What are the pros and cons of cloud backup?

Know which type of cloud backup you want to use and the advantages and disadvantages that come with cloud backups before you trust your data to a third-party provider.

Users everywhere have embraced cloud-based services for a variety of activities, and cloud backup is among the most popular. Considering how much data is created every hour, a reliable and secure backup option is an important part of any data protection strategy. Fueled by affordable bandwidth and capacity optimization technologies, cloud backup has become a popular alternative to portable media such as tape and to local storage such as flash drives and HDDs. This article examines the pros and cons of cloud backup and offers guidance on selecting a cloud service provider.

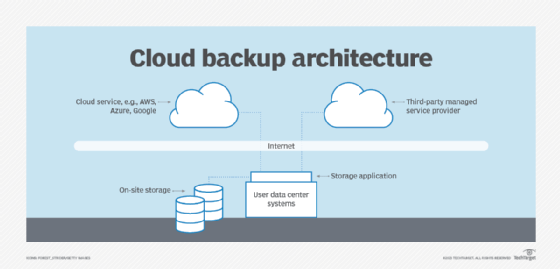

Backing up data, databases, files and applications is an essential component of technology disaster recovery (DR). It ensures that mission-critical data and systems remain available should primary storage platforms become disabled or damaged. Several options for cloud backup are available, including: backing up data directly to a public cloud, such as AWS, Microsoft Azure or Google Cloud; backing up data to a private cloud, e.g., one managed by the user; using a third-party managed service provider (MSP) to host and manage backups in its cloud service; and cloud-to-cloud backup for data created in SaaS apps, such as Microsoft 365 and Salesforce.

Cloud computing and backup services can be a blend of on- and off-premises components. For example, an IT department could have on-premises control of backup software and, optionally, storage array hardware. This is coupled with off-premises services and/or infrastructure -- e.g., large data centers housing powerful compute, network and storage resources -- to provide additional capacity. Figure 1 depicts a typical cloud backup arrangement.

Cloud backup services are typically charged to the customer based on the amount of storage used. Additional pricing factors can include the capacity needed, geographic location, network bandwidth used to transmit data and the number of seats -- i.e., users accessing the service. There can also be fees for discontinuing the service, so be aware of all possible charges before signing a contract.
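The pricing factors above can be combined into a rough estimate. The following is a minimal sketch, not any vendor's billing formula: the `BackupPricing` rate card, its field names and every rate shown are hypothetical placeholders chosen only to illustrate the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class BackupPricing:
    """Hypothetical rate card; every value is a placeholder, not a real vendor price."""
    storage_per_gb_month: float   # charge per GB stored per month
    transfer_per_gb: float        # charge per GB transmitted (e.g., during restores)
    per_seat_month: float         # charge per user accessing the service
    termination_fee: float = 0.0  # one-time fee for discontinuing the service


def monthly_cost(pricing, stored_gb, transferred_gb, seats):
    """Return the estimated recurring charge for one month."""
    return (
        stored_gb * pricing.storage_per_gb_month
        + transferred_gb * pricing.transfer_per_gb
        + seats * pricing.per_seat_month
    )


def total_cost(pricing, months, stored_gb, transferred_gb, seats):
    """Return the cost over a contract term, including the exit fee."""
    recurring = months * monthly_cost(pricing, stored_gb, transferred_gb, seats)
    return recurring + pricing.termination_fee


# Assumed example rates for illustration only.
rates = BackupPricing(storage_per_gb_month=0.01, transfer_per_gb=0.05,
                      per_seat_month=2.0, termination_fee=500.0)
print(round(monthly_cost(rates, stored_gb=5000, transferred_gb=100, seats=20), 2))  # 95.0
print(round(total_cost(rates, months=12, stored_gb=5000, transferred_gb=100, seats=20), 2))  # 1640.0
```

Including the termination fee in the total makes it easier to compare offers whose monthly rates look similar but whose exit costs differ.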

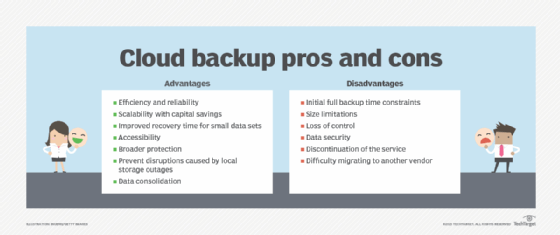

Cloud backup advantages

Several advantages can be realized with cloud backup, including the following:

- Efficiency and reliability. Cloud providers use state-of-the-art technology, such as disk-based backup, data compression, encryption, data deduplication, server virtualization, storage virtualization and application-specific protection, housed in data centers audited under SSAE 16 or its successor, SSAE 18. In addition to the due diligence that accompanies such audits, many cloud vendors offer 24/7 monitoring, management, multiple forms of data security, protection of stored data, reporting and support for disaster recovery activities. Furthermore, users need not worry about technology upgrades, amounts of data stored, migrations or obsolescence, as the service provider addresses all infrastructure issues.

- Scalability with capital savings. Cloud backups can be low-cost, especially for consumers and small businesses without a lot of data to protect. Public clouds also remove most scalability issues, so sufficient storage capacity is rarely a problem. Managing backups is easier with a service provider because the provider handles day-to-day administration. Moving backups to the cloud can also provide an air gap arrangement that helps shield mission-critical data and systems from cyber attacks such as phishing and ransomware, because the copies are kept off-site.

- Improved recovery time for small data sets. For a recovery from tape, an operator would need to recall the tape, load it, locate the data and recover the data. By contrast, file recovery from cloud storage is faster; it doesn't require physical transport from the off-site location, tape handling or seek time. Files to be recovered can be quickly located and downloaded over a network connection. This helps reduce the time needed to recover critical data and systems. It also eliminates the need for a local tape storage array. The success of this method depends largely on how much bandwidth is available from a WAN or internet connection.

- Accessibility. Cloud backup can be attractive to organizations that can't afford the investment and maintenance of a separate DR infrastructure. It can also appeal to those who can afford a full DR site but recognize the greater efficiency and cost savings to be gained by outsourcing data resources. Off-site data copies are accessible from virtually any internet-connected device or location. Such resources provide further security and data protection in a disruptive event, such as a regional disaster.

- Broader protection. Based on identified backup requirements and frequency of data retrievals, cloud backups can protect edge devices such as laptops or tablets that aren't part of an on-premises backup arrangement. Cloud repositories can also replace or supplement the need for tape vaulting, based on a cost analysis.

- Protection from local storage outages. Should local storage arrays fail, the data held in the cloud can be retrieved once the on-premises equipment has been returned to service.

- Data consolidation. Owing to the vast amount of storage capacity available from cloud vendors, users can consolidate their storage assets from multiple locations, locally and globally, helping ensure that a disruption in one location won't shut down the business.

Cloud backup disadvantages

There are also disadvantages to cloud backup, including the following:

- Initial full backup time constraints. Depending on the total capacity of data to be backed up, the first full backup and/or a full recovery of on-site data could take too long and place too heavy a load on production systems. It might be necessary to schedule initial or full backups after hours or over weekends.

- Size limitations. Depending on bandwidth availability, organizations might have a threshold for the amount of data that can be sent daily to the cloud. These limitations can have an effect on backup strategies in which data and applications must be retrieved quickly. Bandwidth used for backups is often a major issue for large IT departments. The amount of data to be backed up can be reduced using various methods such as data deduplication. Backup teams might elect to move only certain data sets to a cloud repository as a way to compensate for bandwidth limitations and reduce costs.

- Loss of control. Users have little to no direct control over their data assets once they have been moved to a cloud service. Service-level agreements (SLAs) are an appropriate way to help ensure that data will be available when needed.

- Data security. Once data leaves an organization and enters a cloud service, the user no longer has real-time control of the data. It's up to the user to establish and enforce SLAs and other provisions that require the cloud vendor to protect the user's data.

- Discontinuation of the service. Understanding the most graceful exit strategy for a cloud service is just as important as comparing specific features. Termination or early withdrawal fees, cancellation notification and data extraction are just a few of the factors to consider. This is less likely to be an issue for large public cloud providers, such as AWS, Azure and Google, but might be an issue for smaller MSPs and regional cloud services.

- Difficulty migrating to another vendor. As with discontinuing a service arrangement, fees to transfer data from one cloud backup vendor to another could add to the overall service costs.
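The time and size constraints in the list above come down to simple bandwidth arithmetic. The sketch below is a rough estimate under stated assumptions: the function names are illustrative, the `efficiency` factor (the share of the nominal link rate actually achieved) is an assumed value, and decimal units are used (1 GB = 8,000 megabits).

```python
def transfer_hours(data_gb, link_mbps, efficiency=0.8):
    """Hours needed to move data_gb over a link rated at link_mbps megabits per second.

    efficiency is an assumed fraction of the nominal link rate actually achieved.
    """
    if link_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_mbps must be positive and efficiency in (0, 1]")
    megabits = data_gb * 8 * 1000              # 1 GB = 8,000 megabits (decimal units)
    seconds = megabits / (link_mbps * efficiency)
    return seconds / 3600


def daily_capacity_gb(link_mbps, window_hours, efficiency=0.8):
    """Largest amount of data (GB) that fits in a backup window of window_hours."""
    megabits = link_mbps * efficiency * window_hours * 3600
    return megabits / 8 / 1000


# Initial full backup (or full recovery) of 10 TB over a 100 Mbps link.
print(round(transfer_hours(10_000, 100), 1))       # 277.8 hours, roughly 11.6 days
# Daily threshold for an 8-hour overnight window on the same link.
print(round(daily_capacity_gb(100, 8), 1))         # 288.0 GB
# Same 10 TB after an assumed 5:1 data reduction from deduplication.
print(round(transfer_hours(10_000 / 5, 100), 1))   # 55.6 hours
```

Numbers like these show why a first full backup is often scheduled over a weekend and why reducing the data set or sending only selected data can be necessary on a constrained link.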

Additional considerations with cloud backup

When contracting for cloud backup, organizations typically arrange for backup as a service (BaaS). Such service offerings migrate data between the user location and the cloud service provider's (CSP's) data center. As noted earlier, to optimize network bandwidth and reduce latency, backup data typically is deduplicated and compressed before being transmitted to the CSP. Although this is generally an efficient process, it introduces some challenges when data retrieval and recovery are needed.

First, deduplicated backup data must be rehydrated, or returned to its native format and file structure, before it can be recovered to an application. Second, beyond the time rehydration takes, the time needed to download large quantities of data -- e.g., VMs, databases, applications -- from the CSP to the user can be considerable and might exceed previously approved recovery time objectives (RTOs).
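To make deduplication, compression and rehydration concrete, here is a minimal sketch using only the Python standard library. It assumes fixed-size chunks and an in-memory dictionary as the chunk store; commercial backup products typically use more sophisticated techniques, so treat this as an illustration of the idea rather than how any particular CSP implements it.

```python
import hashlib
import zlib

CHUNK_SIZE = 4096  # assumed fixed chunk size, chosen for illustration


def deduplicate(data, store):
    """Split data into chunks, store each unique chunk compressed, return the recipe.

    store maps a SHA-256 digest to the zlib-compressed chunk. The returned
    recipe is the ordered list of digests needed to rebuild the data.
    """
    recipe = []
    for offset in range(0, len(data), CHUNK_SIZE):
        chunk = data[offset:offset + CHUNK_SIZE]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in store:            # only new chunks cost storage and bandwidth
            store[digest] = zlib.compress(chunk)
        recipe.append(digest)
    return recipe


def rehydrate(recipe, store):
    """Rebuild the original bytes by decompressing chunks in recipe order."""
    return b"".join(zlib.decompress(store[digest]) for digest in recipe)


store = {}
original = b"A" * CHUNK_SIZE * 3 + b"B" * CHUNK_SIZE   # three identical chunks plus one different
recipe = deduplicate(original, store)
restored = rehydrate(recipe, store)
print(len(recipe), len(store))   # 4 2 (four chunk references, two unique chunks stored)
print(restored == original)      # True
```

The rehydration step has to touch every chunk reference in the recipe, which is why restoring a large, heavily deduplicated data set takes additional processing time on top of the download itself.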

Addressing this challenge is an offering called disaster recovery as a service (DRaaS). Among the options possible with such an offering, users might be able to run key business applications as VM instances in the vendor's cloud. Although this can save valuable production time, the user must ensure that the application will run adequately in the provider's cloud.

In DR situations where a cloud-based interim production environment is created, additional costs can be incurred for high-speed storage resources or sufficient network bandwidth to support hundreds or even thousands of concurrent user sessions. When users are suddenly dependent on the CSP in this way, previously unknown variables can emerge that affect quality of service.

Cloud backup vendors

Numerous firms offer a variety of cloud backup services. As with any venture that takes an IT department outside the four walls of its infrastructure, due diligence is required. The following are examples of questions to ask when evaluating vendors:

- Does the vendor offer SLAs?

- Will the vendor adapt its SLAs to user requirements?

- What different backup methods does the vendor support?

- How scalable are storage resources?

- What DR testing services are offered?

- What network bandwidth resources are available from the vendor?

- Will vendors permit users to periodically test and verify their data?

- What is the vendor fee structure, and what different charges can be contracted?

- How will the vendor manage a user's data, databases and applications?

- How will the vendor ensure that backed-up data is protected, e.g., encrypted?

- What kinds of reports does the vendor provide to users?

- How do vendors ensure compliance with regulations such as HIPAA and GDPR?

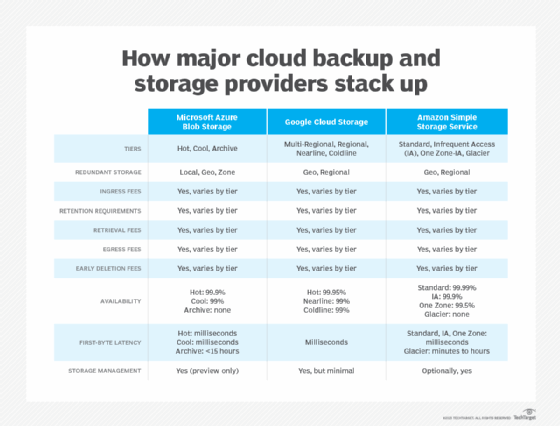

Table 1 compares the leading cloud backup vendors based on several important factors.

In addition to AWS, Microsoft Azure and Google Cloud, many other vendors offer cloud backup, including the following:

- Acronis offers Cyber Backup Cloud, a hybrid cloud BaaS product.

- Arcserve offers Unified Data Protection that includes Cloud Direct DR and backup.

- Asigra has cloud backup features such as software that prevents ransomware from affecting backups.

- Backblaze offers both cloud backup and cloud storage.

- Carbonite provides easy-to-use backup to consumers, SMBs and enterprises.

- Druva offers the Data Resiliency Cloud as a SaaS platform for data backup, DR and cyber resilience.

- IDrive provides a full range of cloud backup services.

- Unitrends offers Cloud Backup as a DRaaS resource.

- Veeam Software provides a variety of cloud backup services including Veeam Backup & Replication.

The use of cloud technology for data storage is hugely popular, and the many options available make it an important choice for most businesses -- regardless of size -- as well as for individual users. The many options also mean that prospective users must do their homework and compare offerings, pricing structures and the availability of SLAs to make an informed decision.