
Data center security compliance checklist

In five steps, create a security compliance plan for your data center. Discover different standards, learn audit schedules and develop procedures that align with compliance requirements.

Data centers must demonstrate compliance with industry-standard guidelines. This quick checklist can help data centers develop data compliance strategies to ensure the security of their customers' data and maintain high operational standards.

Data centers are responsible for handling data securely on behalf of the organizations they serve. A single outage or breach can devastate the business that relies on that data, and it can also be catastrophic for the data center itself.

An effective compliance strategy can help any data center secure the sensitive data it handles. The compliance strategy then becomes the foundation for highly available service delivery and drives long-term customer satisfaction.

Facilities intending to create a data center compliance strategy can use this checklist as a starting point.

1. Align data center and IT teams

Responsibility for data security is often spread across several interested or affected groups within an organization, so true data compliance requires alignment across the entire company. Data center administrators must align or liaise with customer compliance teams to ensure full compliance and coverage.

Admins should get agreement from senior leaders in relevant teams and explain how the departments will work together. They should define the role each team and each team member plays in the strategy. This transparency increases the chances of acceptance and sustains adherence to the processes and procedures.

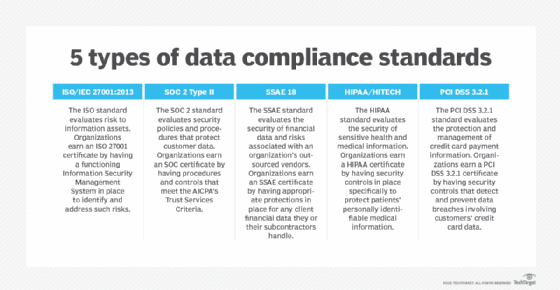

2. Discover compliance options

Each compliance standard has its own requirements, and the ones that apply depend on the data a facility handles. If a data center handles healthcare data, for instance, it must comply with the HIPAA rules that protect patient privacy and be able to demonstrate that compliance. If it handles payment card data, such as transactions from online stores, it must comply with the Payment Card Industry Data Security Standard (PCI DSS), which protects cardholder data such as credit card numbers.

3. Learn compliance audit schedules

Data centers must continually scrutinize their operations and infrastructure. Small, frequent audits of daily processes maintain operational readiness, while in-depth audits verify data compliance. Third-party auditors conduct most compliance audits annually, so a facility that holds multiple certifications must undergo multiple audits each year.

Both data center staff and customers must know the audit schedule, because audits can affect regular facility operations. To ensure operational transparency, an organization must include this information in the service-level agreements in its customer contracts.
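To keep several annual audit cycles visible to staff and customers, a facility could track them in a simple calendar. The Python sketch below is illustrative only: the `Certification` class, the `audit_calendar` function, the fixed 365-day interval and the sample certifications and dates are assumptions, not any auditor's actual schedule.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Certification:
    """One certification the facility holds (names and dates are illustrative)."""
    name: str
    last_audit: date
    interval_days: int = 365  # assumption: an annual audit cycle

    def next_audit(self) -> date:
        return self.last_audit + timedelta(days=self.interval_days)


def audit_calendar(certs: list[Certification], today: date,
                   horizon_days: int = 365) -> list[tuple[str, date]]:
    """List audits due on or before today + horizon_days, earliest first.

    Overdue audits (due before today) are included so they are not missed.
    """
    end = today + timedelta(days=horizon_days)
    due = [(c.name, c.next_audit()) for c in certs if c.next_audit() <= end]
    return sorted(due, key=lambda item: item[1])


# Hypothetical certifications and last-audit dates, for illustration only.
CERTS = [
    Certification("PCI DSS", date(2023, 6, 1)),
    Certification("ISO/IEC 27001", date(2023, 3, 1)),
    Certification("SOC 2", date(2022, 12, 1)),
]
TODAY = date(2024, 1, 15)

for name, due in audit_calendar(CERTS, TODAY):
    status = "OVERDUE" if due < TODAY else "upcoming"
    print(f"{due.isoformat()}  {name:<14} {status}")
```

A real calendar would use the cycles and dates the auditors actually set; the point of the sketch is that one sorted list makes overlapping audits easy to spot and easy to share with customers.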

4. Understand compliance proof

Data centers can demonstrate compliance by publishing the certifications they receive. What they should publish depends on the specific standard. Third-party auditing services award these certifications on behalf of the governing body and regularly reassess the data center's operations and infrastructure.

The certifications a data center requires depend on its customers and the applicable compliance guidelines, so organizations should keep them current as both change.

5. Develop procedures to align with compliance rules

Because compliance audits happen regularly, data center staff must keep their procedures aligned with the compliance rules they follow. Example processes and procedures include:

- Security gap identification. Data center admins should inventory the network to uncover any security risks, vulnerabilities and exposures.

- Physical security review. Facility staff should verify physical access controls for the devices in the facility. They should also install surveillance cameras and other monitoring equipment.

- Incident management. Data center staff should document the incident management process, including procedures, roles and the staff involved, as well as the response and remediation steps to take during an incident.

- Training processes. Managers should provide initial training for all staff, onboarding training for new staff and ongoing training for everyone. They should emphasize reporting procedures so employees know how to report nonconformance to data center admins.
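The security gap identification step can be sketched as a comparison between an approved asset inventory and what a network scan actually finds. This minimal Python sketch rests on stated assumptions: the `find_gaps` function, the dictionary layout and the sample IP addresses and ports are hypothetical, and scan results are assumed to be already parsed into the same shape. It flags hosts that are not in the inventory, inventory entries the scan did not see, and open ports that were never approved.

```python
from ipaddress import ip_address


def find_gaps(approved: dict[str, set[int]],
              discovered: dict[str, set[int]]) -> dict:
    """Compare an approved asset inventory against hosts and ports seen in a scan.

    Both arguments map an IP address string to a set of TCP port numbers:
    allowed ports for `approved`, observed open ports for `discovered`.
    Sorting uses ip_address so 10.0.0.2 sorts before 10.0.0.10.
    """
    unknown = sorted(discovered.keys() - approved.keys(), key=ip_address)
    missing = sorted(approved.keys() - discovered.keys(), key=ip_address)
    unexpected_ports = {}
    for host in sorted(discovered.keys() & approved.keys(), key=ip_address):
        extra = discovered[host] - approved[host]
        if extra:
            unexpected_ports[host] = sorted(extra)
    return {
        "unknown_hosts": unknown,              # on the network, not in the inventory
        "missing_hosts": missing,              # in the inventory, not seen in the scan
        "unexpected_ports": unexpected_ports,  # open ports nobody approved
    }


# Hypothetical data; in practice `discovered` would come from a network scanner.
APPROVED = {"10.0.0.2": {22, 443}, "10.0.0.10": {443}, "10.0.0.11": {5432}}
DISCOVERED = {"10.0.0.2": {22, 443}, "10.0.0.10": {443, 3389}, "10.0.0.99": {80}}

report = find_gaps(APPROVED, DISCOVERED)
for category, findings in report.items():
    print(category, findings)
```

Each finding is a candidate gap rather than a confirmed one: an unknown host might be unauthorized or simply undocumented, and staff would still need to investigate before remediating.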